As machine learning systems become ubiquitous, we should identify which kinds of bias in machine learning affect how people from racial, cultural, and sexual minorities access financial products and information.

Machine learning-based applications offer significant benefits. However, developers, researchers, and other experts must also consider what it means to use such tools ethically. In critical financial services, for example, biases may affect how specific populations access loans, credit, and much more.

In this blog post, we’ll go over the main types of machine learning bias, which ones fintechs can face, and how developers can avoid harming sensitive populations when building financial products.

What is bias in machine learning?

To assume or not to assume?

In machine learning, bias refers to systematic errors that result from faulty assumptions. Some of these errors are fairly benign and go unnoticed. Others, however, may have disastrous consequences: an algorithm can make life-changing decisions about one or more individuals, favoring people from certain ethnicities or classes over others.

There’s a catch to this. You can’t eliminate assumptions from algorithms. Without them, a model would perform no better than random guessing. The goal, then, is not to eliminate bias altogether, because certain biases are inherent to the system.

We need to learn to understand which assumptions help us model and classify data accurately, and which ones have a negative impact on specific populations.

What kind of biases are there?

Here’s a brief list of some of the many types of bias, and related modeling problems, that negatively affect machine learning models.

Selection and sampling

Selection bias occurs when the training data is not large or representative enough. Since every model must first be trained on a training set, developers must ensure that the training data is sufficient and reflects the population the model will serve. Sampling bias, which is related to selection bias, refers to any sampling method that fails to attain true data randomization.
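
One way to spot a non-representative training set is to compare the share of each group in the sample with its share in the population. The sketch below is a minimal illustration, not a complete audit: the function name `representation_gap`, the group labels, and the population shares are all hypothetical.

```python
from collections import Counter


def representation_gap(sample_groups, population_shares):
    """Return (sample share - population share) for each group."""
    if not sample_groups:
        raise ValueError("sample_groups must not be empty")
    counts = Counter(sample_groups)
    total = len(sample_groups)
    return {
        group: counts[group] / total - share
        for group, share in population_shares.items()
    }


# Hypothetical data: group labels for 10 training rows and
# assumed shares of each group in the real population.
training_groups = ["A"] * 8 + ["B"] * 2
population_shares = {"A": 0.6, "B": 0.4}

gaps = representation_gap(training_groups, population_shares)
for group, gap in gaps.items():
    # A negative gap means the group is under-represented in the sample.
    print(f"{group}: {gap:+.2f}")
```

A large negative gap for a group suggests collecting more data for it, or resampling, before training.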

Outliers

Outliers are points of extreme value outside of what’s expected. Their existence is not inherently wrong, but including them within a machine learning model can generate skewed results.
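
As a rough sketch, a common rule of thumb flags any value that lies more than 1.5 times the interquartile range (IQR) beyond the quartiles. The helper `flag_outliers` and the income figures are made up for illustration, and the 1.5 multiplier is a convention rather than a requirement.

```python
import statistics


def flag_outliers(values, k=1.5):
    """Return values outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]


# Hypothetical yearly incomes, in thousands.
incomes = [32, 35, 38, 40, 41, 43, 45, 47, 50, 400]

outliers = flag_outliers(incomes)
print("flagged:", outliers)
# One extreme value pulls the mean far away from the median.
print("mean:", statistics.mean(incomes), "median:", statistics.median(incomes))
```

Flagging values instead of deleting them leaves the decision to a person, who can check whether the point is an error or a legitimate but rare case.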

Overfitting and underfitting

Overfitting and underfitting are possible consequences of selection bias. Underfitting happens when the model is too simple, or the data too uninformative, to capture the underlying pattern. Overfitting, on the other hand, happens when the model fits its training set so closely that it learns incidental details and noise, and then performs poorly on new data.
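
A usual way to tell the two apart is to compare the error on the training data with the error on data the model has not seen. The sketch below uses synthetic data and three deliberately simple models, all chosen for illustration: a constant (too simple), a least-squares line, and a "memorizer" that copies the nearest training point.

```python
import random


def mse(predict, points):
    """Mean squared error of a prediction function on (x, y) points."""
    return sum((predict(x) - y) ** 2 for x, y in points) / len(points)


def make_points(n, rng):
    """Synthetic data: y = 2x plus Gaussian noise."""
    points = []
    for _ in range(n):
        x = rng.uniform(0, 10)
        points.append((x, 2 * x + rng.gauss(0, 1)))
    return points


rng = random.Random(0)
train = make_points(30, rng)
valid = make_points(30, rng)

# Least-squares line fitted on the training set only.
mean_x = sum(x for x, _ in train) / len(train)
mean_y = sum(y for _, y in train) / len(train)
slope = (sum((x - mean_x) * (y - mean_y) for x, y in train)
         / sum((x - mean_x) ** 2 for x, _ in train))
intercept = mean_y - slope * mean_x

models = {
    # Too simple: ignores x entirely.
    "constant": lambda x: mean_y,
    # Matches the shape of the data.
    "line": lambda x: intercept + slope * x,
    # Memorizes the training set, noise included.
    "memorizer": lambda x: min(train, key=lambda p: abs(p[0] - x))[1],
}

errors = {name: (mse(f, train), mse(f, valid)) for name, f in models.items()}
for name, (train_err, valid_err) in errors.items():
    print(f"{name:>9}: train={train_err:.2f} validation={valid_err:.2f}")
```

High error on both sets points to underfitting, while a training error near zero paired with a clearly higher validation error points to overfitting.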

Exclusion bias

Not every item in a data set is valuable. In pre-processing, analysts usually eliminate null values, outliers, or any data points that may be considered irrelevant. However, in doing so, they may end up removing more information than necessary, which makes the remaining data a less accurate picture of what was collected.
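
A simple safeguard is to compare the composition of the data before and after cleaning. The sketch below assumes rows stored as dictionaries with a hypothetical `group` field and uses made-up applicants; it shows how dropping incomplete rows can quietly shrink one group.

```python
from collections import Counter


def group_shares(rows):
    """Share of rows belonging to each group."""
    counts = Counter(row["group"] for row in rows)
    return {group: n / len(rows) for group, n in counts.items()}


def drop_incomplete(rows):
    """Remove rows where any field is missing (None)."""
    return [row for row in rows if all(v is not None for v in row.values())]


# Hypothetical applicants; group B is missing income data more often.
rows = (
    [{"group": "A", "income": 50} for _ in range(5)]
    + [{"group": "B", "income": 45} for _ in range(2)]
    + [{"group": "B", "income": None} for _ in range(3)]
)

before = group_shares(rows)
after = group_shares(drop_incomplete(rows))
print("before:", before)
print("after: ", after)
```

If a group's share drops sharply after cleaning, the removal step itself is introducing bias and deserves a closer look.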

There are many more types of bias. Some, such as racial bias or the ones listed above, can lead to discrimination on illegal grounds and profoundly affect sensitive populations. Productive biases, on the other hand, are inherent to the model. It is up to the data analyst or development team to strike a delicate balance that prevents illegal or unfair discrimination.

How does bias in machine learning occur?

Algorithms can have a disparate impact on sensitive populations.

One of the classic examples used to understand how bias in machine learning occurs is the COMPAS (Correctional Offender Management Profiling for Alternative Sanctions) algorithm, which is intended to predict the likelihood of a defendant reoffending.

Unfortunately, because of the models and datasets being used, Black defendants who did not reoffend were almost twice as likely as white defendants to be misclassified as high risk, according to ProPublica’s analysis. A later study by Dressel and Farid found that untrained people recruited online predicted reoffending about as accurately as the algorithm itself.

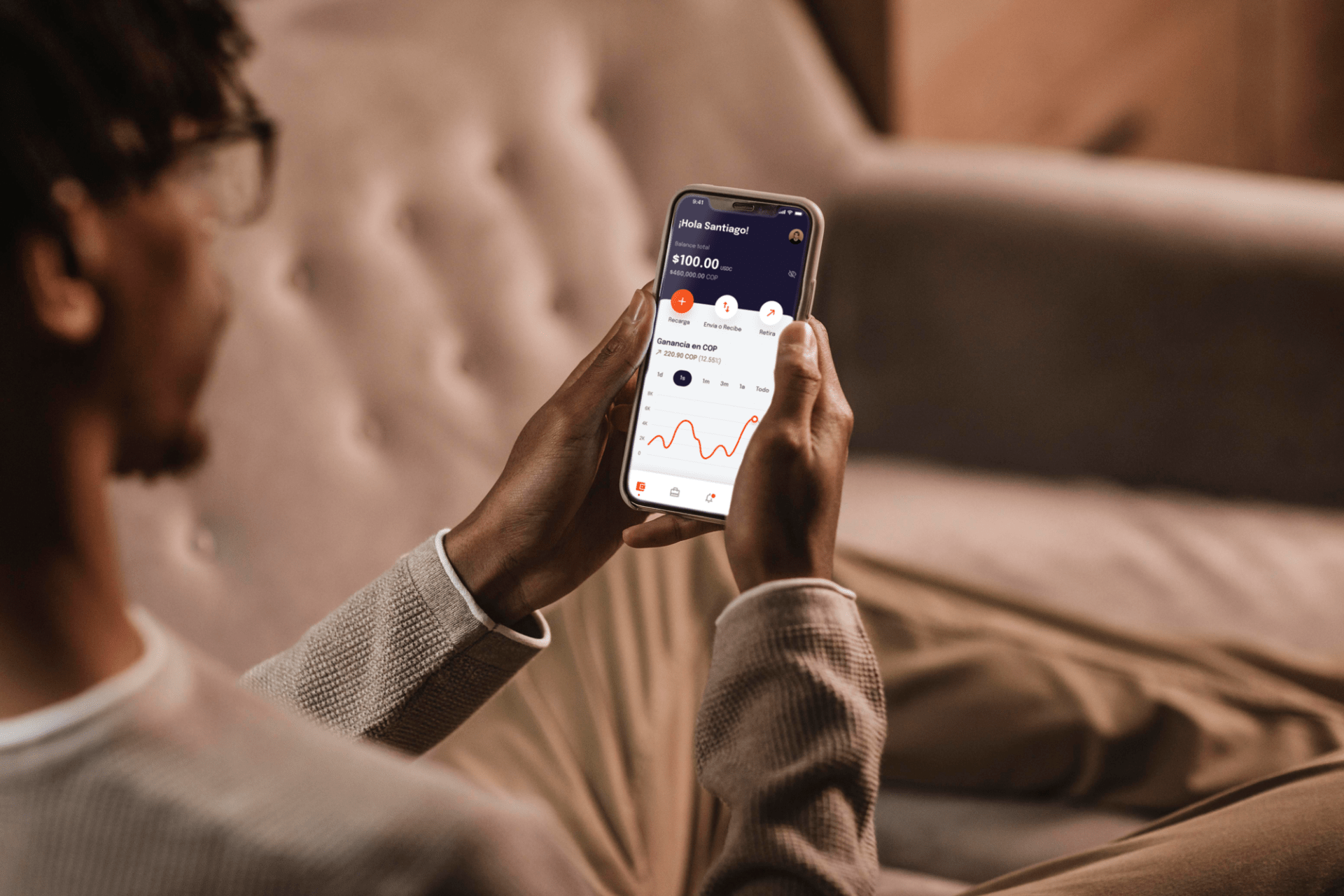
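
The kind of disparity described above can be measured by comparing false positive rates across groups, that is, how often people who did not reoffend were still labeled high risk. The sketch below uses made-up records and hypothetical group names; it is not COMPAS data and not ProPublica's methodology.

```python
from collections import defaultdict


def false_positive_rates(records):
    """False positive rate per group: FP / (FP + TN).

    Each record is (group, predicted_high_risk, reoffended).
    """
    false_pos = defaultdict(int)
    negatives = defaultdict(int)
    for group, predicted_high_risk, reoffended in records:
        if not reoffended:
            negatives[group] += 1
            if predicted_high_risk:
                false_pos[group] += 1
    return {group: false_pos[group] / n for group, n in negatives.items()}


# Made-up records for illustration only; these are NOT COMPAS data.
records = [
    ("group_1", True, False), ("group_1", True, False),
    ("group_1", False, False), ("group_1", False, False),
    ("group_1", True, True),
    ("group_2", True, False), ("group_2", False, False),
    ("group_2", False, False), ("group_2", False, False),
    ("group_2", True, True),
]

rates = false_positive_rates(records)
print(rates)
```

A model can have similar overall accuracy for two groups and still make this kind of error far more often for one of them, which is why per-group error rates are worth checking separately.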

In finance, most credit scoring systems have a disparate impact on people and communities of color. Moreover, changing such an algorithm is incredibly complicated, as FICO scores (credit scores developed by the Fair Isaac Corporation) are among the most critical factors used across the financial ecosystem. In fact, a series of metrics, including income, credit scores, and the data used by credit reporting bureaus, correlate strongly with race. According to a Brookings Institution report on FICO scores, white homebuyers have an average credit score 57 points higher than Black homebuyers and 33 points higher than Hispanic/Latino ones.

Given all this, how can developers prevent existing biases from carrying over into new methods? The answer lies in understanding the kinds of bias developers must avoid and establishing a new working framework. Although examples like the ones mentioned above are abundant, building unbiased algorithms is hard. To begin with, engineers creating the algorithms must ensure they do not leak any personal biases into their models.
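
One simple way to quantify disparate impact is to divide one group's approval rate by that of a reference group. The sketch below uses made-up loan decisions and hypothetical function names. The 0.8 threshold comes from the "four-fifths" rule of thumb used in US employment guidelines; applying it to lending is an assumption made here for illustration.

```python
def approval_rate(decisions):
    """Share of approved applications; decisions is a list of booleans."""
    return sum(decisions) / len(decisions)


def impact_ratio(group_decisions, reference_decisions):
    """Approval rate of a group divided by that of a reference group."""
    return approval_rate(group_decisions) / approval_rate(reference_decisions)


# Made-up loan decisions (True = approved), for illustration only.
reference = [True] * 8 + [False] * 2   # 80% approved
group = [True] * 5 + [False] * 5       # 50% approved

ratio = impact_ratio(group, reference)
# 0.8 is the "four-fifths" rule of thumb; treating it as the cutoff
# for lending is an assumption of this sketch.
print(f"ratio={ratio:.3f}", "review" if ratio < 0.8 else "ok")
```

A low ratio does not prove discrimination on its own, but it signals that the model's inputs and decisions deserve closer review.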

What can developers do?

The implementation of new technologies in finance faces a significant challenge.

If some futurists are correct, financial markets will soon depend on increasingly sophisticated machine learning-based analysis. Because of this, addressing bias should be a primary concern for data scientists and developers. Minimizing bias is a conscious practice involving different types of efforts, from who is on the team that creates the models to how we process data.

Preventing racial bias has been widely discussed by well-known data scientists, such as Meredith Broussard. In her book Artificial Unintelligence, she argues that eliminating such biases requires diversity. A racially and culturally diverse team brings different perspectives that provide valuable input when tackling sensitive issues.

Teams should also enforce some form of data governance. This is the understanding, on the part of individuals and companies, that they are socially responsible for the algorithms they create. One way of establishing data governance is to draw up a set of questions to ask throughout the development process.

Each set of questions is unique and suited to the needs of each organization. Incorporating aspects from other disciplines helps create an effective ethics guide that teams can use to navigate challenging dilemmas.

Advocating transparency

Promoting transparency and dialogue when creating and calibrating models ensures better technology. In the case of fintech, “better” means more financially inclusive products with a greater ability to adapt to an ever-changing market while protecting the rights of thousands of users.

Computer scientist Melanie Mitchell thinks we shouldn’t worry about robots but about how people use artificial intelligence in everyday technologies. Because analyzing data using machine learning algorithms is currently more helpful than, let’s say, having a robot puppy, creating better conditions for the development of such technologies will benefit us all.
