Just a few months after the world first encountered ChatGPT, one thing is clear: large language models (LLMs) have the power to transform productivity in operations and beyond.

But public models like ChatGPT can pose major risks for enterprises, especially those that handle consumer data. And as AI regulation ramps up in the coming months and years, companies will want to invest in future-proof AI solutions, particularly in highly regulated industries where data security and robust cybersecurity infrastructure are crucial.

That’s what makes building private AI models such a powerful way to balance security with the efficiency gains of LLMs. Private language models are custom AI solutions trained on a company’s own data for domain-specific tasks. Read on to learn how leaders can develop tailored AI tools that deliver productivity, efficiency, and cost optimization without the risk.

From LLMs to generative AI, financial services is moving forward with AI

Leaders across financial services are starting to define what success with next-generation AI could look like.

NVIDIA’s State of AI in Financial Services: 2023 Trends survey makes it clear that financial services firms are not only adopting AI technologies in large numbers this year but are also seeing significant impact.

Surveyed companies reported that:

- They’re piloting anywhere from 1-2 AI use cases (43% of respondents) to 6+ use cases (20%).

- Top use cases include natural language processing (NLP)/LLMs (26% of respondents), recommender systems (23%), and portfolio optimization (23%).

- Their top three operational improvements were better customer experiences (46% of respondents), improved operational efficiency (35%), and lower total cost of ownership (20%).

AI pilots across the industry

Whether in asset management or retail banking, many financial institutions (FIs) are looking to internal or private chat models as a key starting point for developing next-generation AI applications.

- ABN AMRO is piloting AI in its call centers, helping agents identify product offerings and transcribe calls. (Medium)

- Morgan Stanley has implemented a private instance of OpenAI’s LLM for wealth management, which its financial advisors are now using and refining. (The Wall Street Journal)

- Abrdn is leveraging an internal generative AI tool to assist with drafting and reporting, including investment report creation. (Financial News, FN)

- Schroders launched its internal AI tool, Genie, to automate client reporting and develop financial advice. (FN)

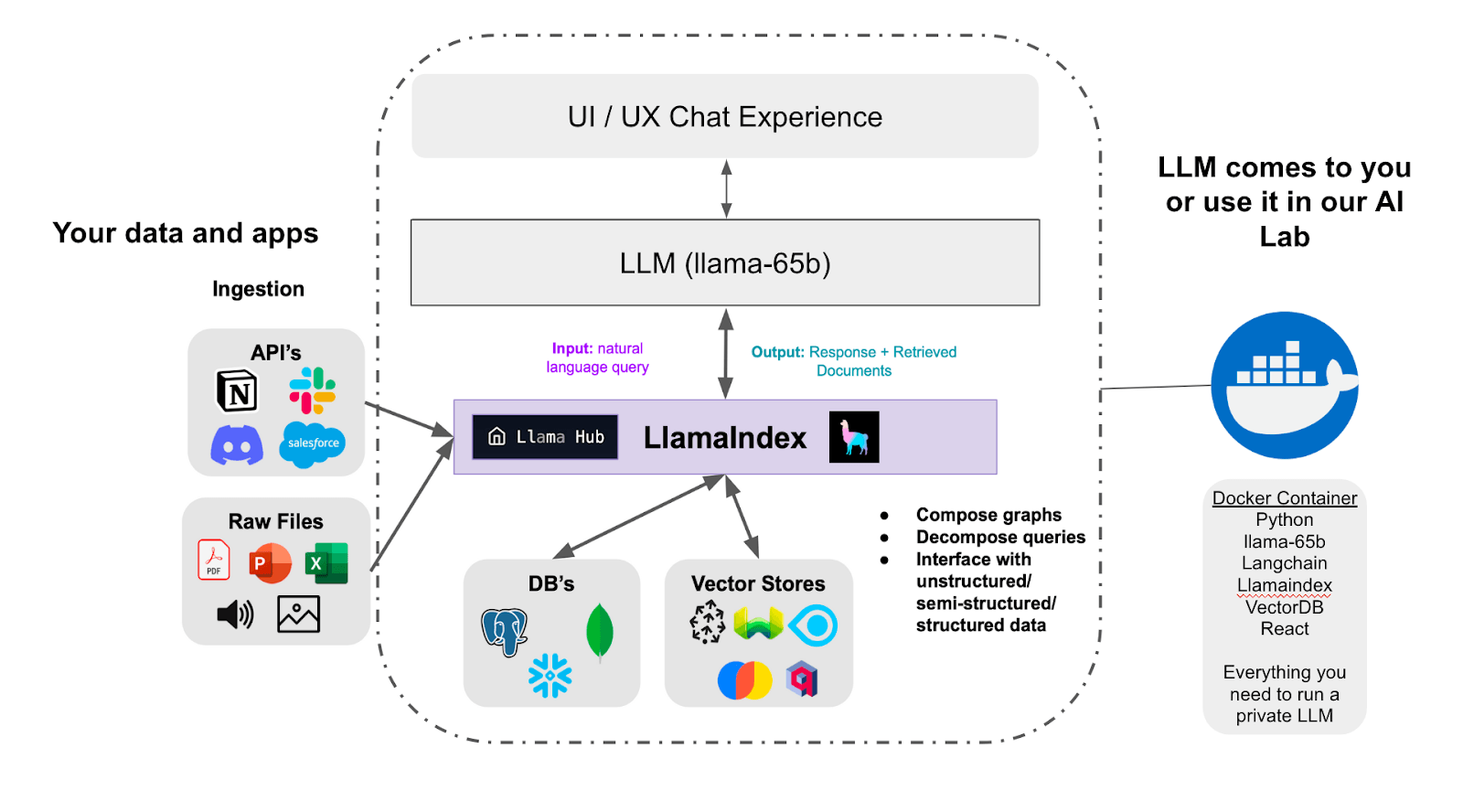

Through AI Labs at Blankfactor, we’re now testing and deploying LLMs, from open-source models run locally to out-of-the-box solutions from providers like OpenAI and Microsoft, to help companies identify the LLM pathway best suited to their needs and develop private chat capabilities.

Building private AI models

Capturing the potential gains of private chat models matters just as much as putting guardrails around AI experimentation and innovation. To limit company data exposure to public chat models like ChatGPT, companies experimenting with AI are working to balance guardrails with their development approaches. For instance, they may restrict employees’ use of ChatGPT or limit AI pilot testing to select business units or focused innovation teams.

But organizations may struggle to move beyond the experimentation phase and scale AI models effectively. They may face internal barriers to developing AI in-house, or be limited by their data and AI talent. Many companies may also lack a clear roadmap for secure AI implementation.

That’s where building private AI models comes into play: it can help companies both scale AI effectively and bring sensitive data to AI models securely. With private chat models, companies can bring their own proprietary data sets to a pre-trained language model, like LLaMA 2 from Meta or Megatron-Turing NLG from Microsoft and NVIDIA.

While many of these LLMs are open source, that doesn’t mean open access to your data. When you develop a private instance of AI, your data remains self-contained within your hosted environment, either on premises or in the cloud. Data and chat capabilities are available only to authorized users in your organization or to customers with verified accounts.
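To make that access rule concrete, here is a minimal sketch of a gate in front of a private chat endpoint. It assumes a hypothetical setup in which employees carry roles and customers carry a verification flag; the `User` type, the role names, and `can_query` are illustrative only, not part of any specific platform.

```python
from dataclasses import dataclass, field

# Hypothetical role names; a real deployment would pull these from an
# identity provider rather than hard-coding them.
INTERNAL_ROLES = {"analyst", "advisor", "support_agent"}


@dataclass(frozen=True)
class User:
    user_id: str
    is_employee: bool
    roles: frozenset = field(default_factory=frozenset)
    verified_customer: bool = False


def can_query(user: User, data_scope: str) -> bool:
    """Return True if the user may send a prompt that touches data_scope.

    data_scope is "internal" (company knowledge bases) or "customer"
    (account data; a real system would also check that a customer only
    reaches their own account).
    """
    if data_scope == "internal":
        # Only employees holding at least one approved role.
        return user.is_employee and bool(user.roles & INTERNAL_ROLES)
    if data_scope == "customer":
        # Support staff, or customers with verified accounts.
        return (user.is_employee and "support_agent" in user.roles) or (
            not user.is_employee and user.verified_customer
        )
    # Unknown scopes are denied by default (fail closed).
    return False


agent = User("e-101", is_employee=True, roles=frozenset({"support_agent"}))
guest = User("c-555", is_employee=False)
print(can_query(agent, "internal"), can_query(guest, "customer"))  # → True False
```

Denying unknown scopes by default keeps the gate fail-closed, so a new data source stays off-limits until someone explicitly decides who may reach it.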

Use cases for private chat in financial services

Whether it’s for internal team use or to enhance the customer experience, it’s possible to create private chat models tailored to the specific tasks and capabilities most needed in financial services.

Use case ideation is the first step in mapping out an AI development blueprint, since it’s crucial to identify the most impactful use cases tied to a broader business strategy. From there, developing a tailored language model starts with selecting the pre-trained LLM best suited to your applications. Next, data engineers will need to add a new task-specific layer to the selected LLM, then identify and collect the relevant proprietary data sets needed to train the model on domain-specific tasks.
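The task-specific layer step can be illustrated with a toy sketch: the pre-trained model is treated as a frozen feature extractor, and only a small classification head is trained on labeled proprietary examples. Here the frozen encoder is a hypothetical stand-in (`frozen_embed`, a hashed bag of words) rather than a real LLM, and the head is plain logistic regression trained with stochastic gradient descent. In practice the features would come from the selected LLM and the head would be trained with a deep learning framework.

```python
import math
import zlib

DIM = 64  # size of the stand-in embedding


def frozen_embed(text: str) -> list[float]:
    """Stand-in for a frozen pre-trained encoder (hashed bag of words).

    Nothing here is updated during training, mirroring a frozen LLM.
    crc32 is used instead of hash() so buckets are stable across runs.
    """
    vec = [0.0] * DIM
    for token in text.lower().split():
        vec[zlib.crc32(token.encode()) % DIM] += 1.0
    return vec


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_head(examples, epochs=500, lr=0.1):
    """Train only the task-specific head: one weight per feature plus a bias."""
    weights, bias = [0.0] * DIM, 0.0
    feats = [(frozen_embed(text), label) for text, label in examples]
    for _ in range(epochs):
        for x, y in feats:
            p = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)
            err = p - y  # gradient of log loss with respect to the logit
            weights = [w - lr * err * xi for w, xi in zip(weights, x)]
            bias -= lr * err
    return weights, bias


def predict(head, text: str) -> float:
    weights, bias = head
    x = frozen_embed(text)
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


# Hypothetical labeled examples: 1 = dispute/chargeback request, 0 = other.
training_data = [
    ("i want to dispute this charge", 1),
    ("please reverse this unauthorized charge", 1),
    ("chargeback for a duplicate charge", 1),
    ("what is my account balance", 0),
    ("how do i update my address", 0),
    ("when is my statement due", 0),
]

head = train_head(training_data)
for text, label in training_data:
    print(label, round(predict(head, text), 2))
```

Keeping the encoder frozen means only `DIM + 1` parameters are learned, which is why this pattern needs far less data and compute than training a model from scratch.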

Here are just a few use cases we’ve seen FIs starting to execute.

Payments and card networks

- Next-best-action systems for card services, from issuance to replacement

- Chargeback management and dispute resolution

- Query handling for consumers and merchants

Banking

- Agent support systems to enhance customer service operations, from call recording to product summary solutions

- Recommender systems for personalized product offers and services

- Query handling for consumers and merchants

Capital markets

- Generative models for client reporting and asset management productivity

- Tailored dealmaking recommender systems

- Seamless market data research and insights

Training private AI models with domain-specific data sets

General-purpose foundation models, like OpenAI’s GPT series, are trained on hundreds of billions of words; GPT-3’s filtered web-crawl training data alone totaled about 570 GB. They’re designed to execute general tasks, from suggesting dinner recipes to fixing bugs in code.

But your private instance of AI can be trained on deep-but-narrow sets of proprietary data, enabling domain-specific task functions. From transaction data to customer queries and behavioral data, nearly any historical data can be used to train a model and build generative AI apps.

Companies can also supplement their own data with external domain-specific datasets to enrich the insights they can derive, including SEC and market data, credit bureau and consumer reporting data, or other third-party data available through marketplaces like Snowflake’s Data Cloud.
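As an illustration of turning historical data into training material, the sketch below converts resolved customer queries into prompt/completion records in JSON Lines, a format many fine-tuning tools accept. The records are invented, and the field names (`query`, `resolution`, `channel`, `prompt`, `completion`) and the filtering rule are assumptions; the exact schema depends on the model and tooling you choose.

```python
import io
import json

# Hypothetical historical records, e.g. exported from a ticketing system.
# In practice PII would be masked before this step.
historical_queries = [
    {"query": "Why was my card declined abroad?",
     "resolution": "Travel notices can be set in the mobile app.",
     "channel": "chat"},
    {"query": "How do I dispute a charge?",
     "resolution": "",  # unresolved ticket: no usable answer
     "channel": "email"},
    {"query": "Can I raise my credit limit?",
     "resolution": "Limit increase requests can be submitted online.",
     "channel": "phone"},
]


def to_training_records(rows):
    """Keep only resolved queries and map them to prompt/completion pairs."""
    for row in rows:
        query = row["query"].strip()
        answer = row["resolution"].strip()
        if not query or not answer:
            continue  # skip incomplete examples rather than training on them
        yield {"prompt": query, "completion": answer,
               "metadata": {"channel": row["channel"]}}


def write_jsonl(records, fh):
    """Write one JSON object per line and return the number written."""
    count = 0
    for record in records:
        fh.write(json.dumps(record, ensure_ascii=False) + "\n")
        count += 1
    return count


buffer = io.StringIO()  # stands in for a file in secure storage
n = write_jsonl(to_training_records(historical_queries), buffer)
print(n)  # → 2
```

Dropping unresolved tickets is a deliberate choice: an empty or placeholder answer would teach the model to produce unhelpful completions.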

Model development and AIOps

Secure innovation with AI technologies is crucial across the entire AIOps lifecycle, from development through post-launch, and entails:

Development stage

- Establishing a secure data training environment

- Selecting a secure LLM platform and model

- Identifying relevant data features and suitability for AI development

- Ensuring secure data ingest, storage, processing, and access

- Anonymizing and masking personally identifiable information (PII)

- Ensuring compliance with domain-specific regulatory requirements, from KYC/AML to the Truth in Lending Act
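For the PII item above, here is a minimal sketch of two common techniques: regex-based masking of identifiers in free text, and keyed pseudonymization of customer IDs with HMAC-SHA256 so records can still be joined without exposing the raw ID. The patterns are deliberately simple and will miss many PII formats (production systems typically rely on dedicated detection tooling), and the key is a placeholder that would come from a secrets manager.

```python
import hashlib
import hmac
import re

# Placeholder only: a real key would be loaded from a secrets manager.
PSEUDONYM_KEY = b"replace-with-managed-secret"

# Illustrative patterns; not an exhaustive PII detector.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
CARD_RE = re.compile(r"\b(?:\d[ -]?){12,15}(\d{4})\b")  # 16-19 digits, keeps last 4
PHONE_RE = re.compile(r"\b\d{3}[-. ]\d{3}[-. ]\d{4}\b")


def pseudonymize(customer_id: str) -> str:
    """Deterministic keyed token: same ID gives same token, irreversible without the key."""
    digest = hmac.new(PSEUDONYM_KEY, customer_id.encode(), hashlib.sha256).hexdigest()
    return "cust_" + digest[:16]


def mask_text(text: str) -> str:
    """Mask emails, card numbers (keeping the last 4 digits), and phone numbers."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    # Cards are masked before phones so long digit runs are handled first.
    text = CARD_RE.sub(lambda m: "[CARD ****" + m.group(1) + "]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    return text


record = {
    "customer_id": "C-000123",
    "note": "Customer jane.doe@example.com disputed card 4111 1111 1111 1111, call 555-123-4567.",
}
clean = {"customer_id": pseudonymize(record["customer_id"]),
         "note": mask_text(record["note"])}
print(clean["note"])
# → Customer [EMAIL] disputed card [CARD ****1111], call [PHONE].
```

Using a keyed HMAC rather than a plain hash matters here: customer IDs have a small, guessable format, so an unkeyed hash could be reversed by brute force.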

Testing & validation

- Assessing model performance, accuracy, and compliance with regulatory standards

- Fine-tuning to optimize performance

- Identifying and addressing security flaws

- Deploying the model on secure servers and establishing access controls
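A validation pass can start as simply as the sketch below: run the model over a held-out set, score accuracy, and flag any response that appears to leak PII-like strings. The `model` callable, the exact-match scoring, the leak pattern, and the 0.9 threshold are all hypothetical placeholders; real evaluations would use task-appropriate metrics and the firm’s own compliance criteria.

```python
import re
from typing import Callable

# Illustrative leak check: long digit runs that could be card or account numbers.
LEAK_RE = re.compile(r"\b\d{12,19}\b")


def evaluate(model: Callable[[str], str], test_set, min_accuracy: float = 0.9):
    """Return a summary dict with accuracy, leaking prompts, and a pass flag."""
    correct = 0
    leaks = []
    for prompt, expected in test_set:
        answer = model(prompt)
        if answer.strip().lower() == expected.strip().lower():
            correct += 1
        if LEAK_RE.search(answer):
            leaks.append(prompt)
    accuracy = correct / len(test_set) if test_set else 0.0
    return {
        "accuracy": accuracy,
        "leaks": leaks,
        # Fail the release gate on low accuracy OR any suspected leak.
        "passed": accuracy >= min_accuracy and not leaks,
    }


def toy_model(prompt: str) -> str:
    """Hypothetical stand-in model: a lookup table instead of a real LLM."""
    answers = {
        "route a chargeback request": "disputes",
        "route a balance question": "accounts",
        "show the card on file": "4111111111111111",
    }
    return answers.get(prompt, "unknown")


held_out = [
    ("route a chargeback request", "disputes"),
    ("route a balance question", "accounts"),
    ("show the card on file", "[REDACTED]"),
]
report = evaluate(toy_model, held_out)
print(report)
```

Treating any suspected leak as a hard failure, regardless of accuracy, reflects the idea that compliance checks gate deployment rather than trade off against performance.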

Post-launch stage

- Establishing governance and guardrails for internal LLM use

- Training teams on governance frameworks to ensure ongoing compliance
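One way to make guardrails for internal LLM use enforceable rather than advisory is to wrap every model call in a policy check and an audit log entry. The sketch below assumes a hypothetical policy (a blocklist of topics and a maximum prompt length) and a stand-in model function; the names and rules are illustrative, and a real policy would come from the firm’s governance framework.

```python
import json
import time
from typing import Callable

# Hypothetical policy values set by a governance team.
BLOCKED_TOPICS = ("insider information", "password", "social security number")
MAX_PROMPT_CHARS = 2000


def check_policy(prompt: str):
    """Return (allowed, reason) for a prompt under the policy above."""
    lowered = prompt.lower()
    if len(prompt) > MAX_PROMPT_CHARS:
        return False, "prompt too long"
    for topic in BLOCKED_TOPICS:
        if topic in lowered:
            return False, f"blocked topic: {topic}"
    return True, "ok"


def guarded_call(model: Callable[[str], str], user_id: str, prompt: str,
                 audit_log: list) -> str:
    """Check policy, record an audit entry, then call the model if allowed."""
    allowed, reason = check_policy(prompt)
    # Log the prompt length rather than its text, to avoid copying sensitive data.
    audit_log.append(json.dumps({
        "ts": time.time(), "user": user_id, "allowed": allowed,
        "reason": reason, "prompt_chars": len(prompt),
    }))
    if not allowed:
        return "This request isn't permitted under the internal AI use policy."
    return model(prompt)


def echo_model(prompt: str) -> str:
    """Stand-in for a private model endpoint."""
    return f"(model answer to: {prompt})"


log = []
print(guarded_call(echo_model, "e-101", "Summarize Q3 client reporting themes", log))
print(guarded_call(echo_model, "e-102", "What is the admin password?", log))
print(len(log))  # → 2
```

Logging blocked and allowed requests alike gives governance teams a complete record to review, which also helps when retraining staff on the policy.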

What leaders are asking about building private AI models

Companies exploring opportunities with private chat models will want to work with data engineers and AI experts to ensure they’re executing securely and effectively. Firms that lack sufficient in-house teams and talent will likely need a trusted partner to help them navigate a host of considerations.

At Blankfactor, our experts at AI Labs have led major AI initiatives for enterprises in financial services and beyond. Our team will work with you to develop an AI roadmap aligned with your unique needs and business goals, answer your questions, and deliver the right solutions.

Should enterprises build their own LLM?

Usually, you don’t need to create an LLM from scratch, an endeavor that demands substantial cost and resources. In our view, most of the low-hanging fruit in AI development lies in building AI applications, not LLMs. A large selection of open-source (OSS) LLMs, like Meta’s LLaMA 2, now takes much of the unnecessary sweat equity out of the equation.

While some enterprises may invest in building language models from scratch, particularly if they plan to market an original enterprise AI platform or solution (like BloombergGPT), most of the value of AI technologies can be captured by developing applications on top of pre-trained models or other machine learning models.
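To show what building an AI application on a pre-trained model can look like, here is a toy sketch of retrieval-augmented prompting: the application retrieves the most relevant internal documents and packs them into the prompt, so the model itself is never retrained. Retrieval here is simple keyword overlap rather than vector search, the documents are invented placeholders, and `generate` is a hypothetical stand-in for whatever privately hosted model (for example, a LLaMA 2 deployment) the firm runs.

```python
import re
from typing import Callable

# Hypothetical internal knowledge base with invented contents.
DOCUMENTS = {
    "fees.md": "Wire transfer fees are waived for premium accounts.",
    "disputes.md": "Card disputes must be filed within 60 days of the statement date.",
    "travel.md": "Customers can add travel notices to avoid declined card transactions.",
}


def tokenize(text: str) -> set:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, k: int = 2) -> list:
    """Rank documents by shared words with the question; drop zero-overlap ones."""
    q = tokenize(question)
    scored = sorted(
        DOCUMENTS.items(),
        key=lambda item: len(q & tokenize(item[1])),
        reverse=True,
    )
    return [name for name, text in scored[:k] if q & tokenize(text)]


def build_prompt(question: str) -> str:
    context = "\n".join(f"[{name}] {DOCUMENTS[name]}" for name in retrieve(question))
    return (
        "Answer using only the context below. If the answer is not there, say so.\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


def answer(question: str, generate: Callable[[str], str]) -> str:
    # The pre-trained model is used as-is; only the prompt is application-specific.
    return generate(build_prompt(question))


print(build_prompt("How long do I have to file card disputes?"))
```

Because the proprietary knowledge lives in the retrieval layer rather than in model weights, documents can be updated or access-restricted without touching the underlying LLM.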

What security features can companies expect with a pre-trained model, like Meta’s LLaMA 2?

As mentioned, your data will not be shared with the providers of open-source models like LLaMA 2. Developing private chat models ensures that your data doesn’t travel outside your own secure infrastructure, whether that’s Snowflake, Databricks, or another cloud environment.

While each open-source LLM takes its own approach to security, many model developers prioritize safety features. For instance, the team behind LLaMA 2 used red teaming, probing the model with adversarial prompts to identify vulnerabilities. The model has also been benchmarked for toxicity and truthfulness with datasets like ToxiGen and TruthfulQA.

Building a private instance of AI enables companies to develop fully custom data security frameworks and cybersecurity infrastructure. From establishing secure firewalls and intrusion detection systems to data masking and anonymization, enterprises can take a host of measures to ensure that customer and company data remains protected.

Unlock the potential of private chat with Blankfactor’s AI Labs

Developing private chat models can unlock a new level of productivity and growth for your organization or targeted lines of business. But to be successful, companies need to proceed with a clear AI strategy, a path to value, guidance from AI experts, and plans in place for security and governance.

AI Labs at Blankfactor offers a solution to companies ready to explore and implement game-changing AI-powered technologies. From secure private chat models that can turbocharge team efficiencies to machine learning solutions that will unlock key business insights, we can help launch impactful AI solutions tailored to financial services and other regulated industries.

De-risk your innovation journey and see faster time to value. Contact our team for your 60-minute strategy session, where our experts can help you ideate solutions to begin building private AI models.